I am reading this documentation from Mastodon.

And from it, I understand that Mastodon requires an HTTP Signature, signing at least these headers:

(request-target) host date digest

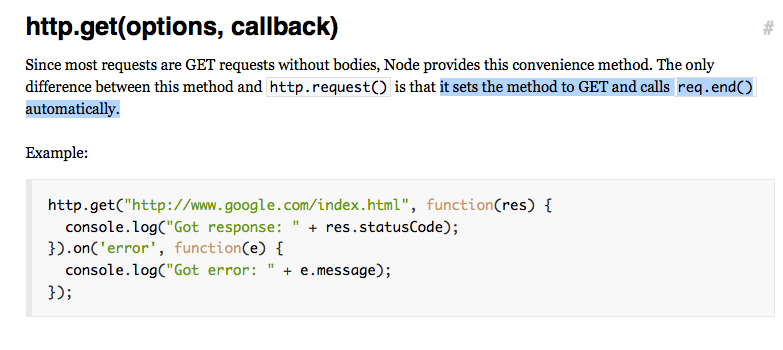

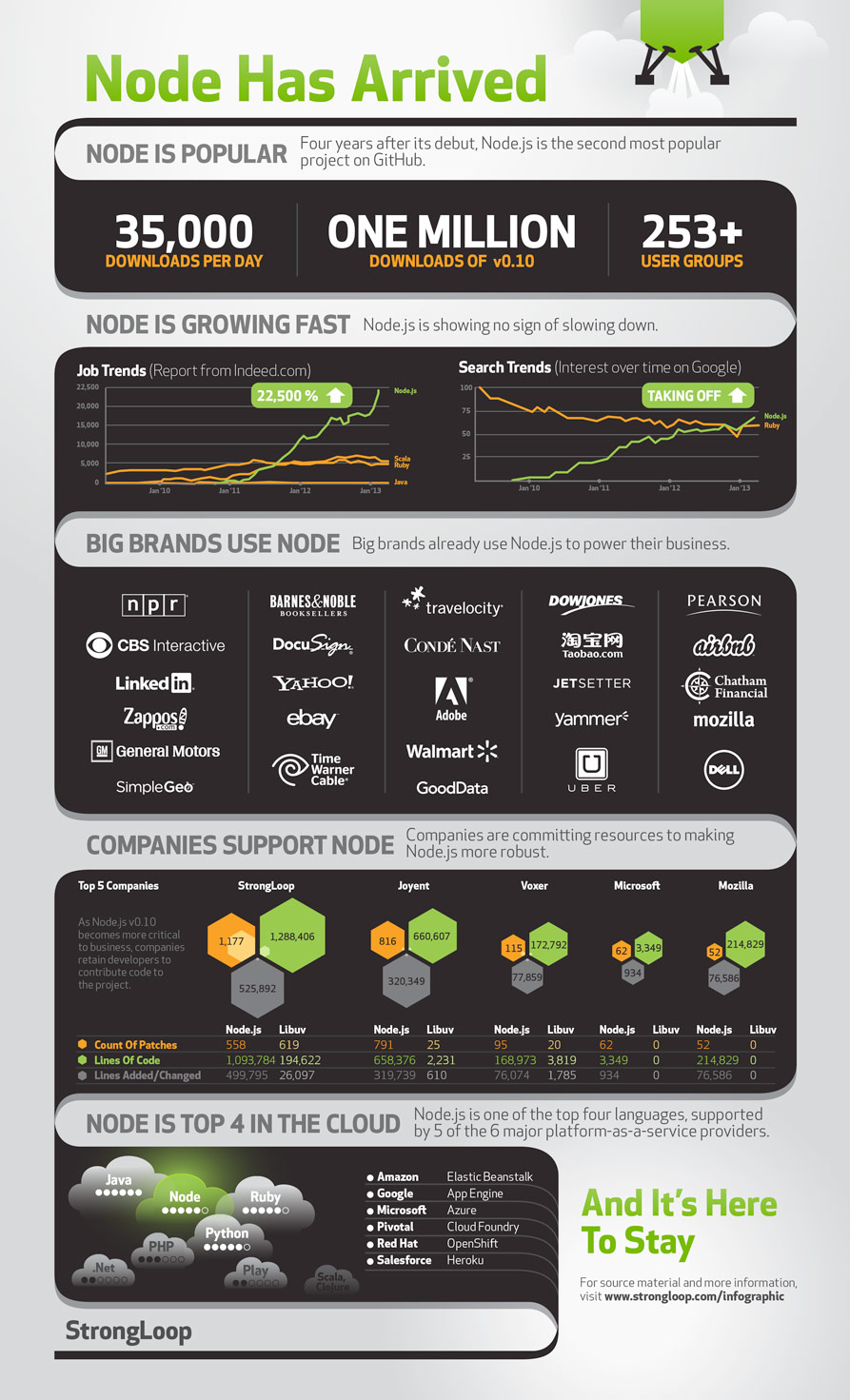

If your client is written in JavaScript and runs on Node.js, the npmjs site gives an example of how to build a signature.

But I believe this example is out of date. It does not use the (request-target) pseudo-header, so it's not going to work.

So what must you do? Go back to the Mastodon documentation. Unfortunately, that too is either out of date or confusing. For a POST request, the Mastodon documentation states that you must first compute the “RSA-SHA256 digest hash of your request’s body”. This is not correct. There is no such thing as an “RSA-SHA256 digest”! RSA-SHA256 is the name of a signature algorithm, not of a digest. Message digest algorithms include SHA-1, SHA-256, MD5 (old and insecure at this point), and others. According to my reading of the code, Mastodon supports only SHA-256 digests. The documentation should state that you must compute the “SHA-256 digest”. (There is no RSA key involved in computing a digest; the RSA key comes into play only later, when you sign.)

Regardless of the digest algorithm you use, the computed digest is a byte array. That brings us to the next question: how to encode that byte array as a string, in order to pass it to Mastodon. Some typical options for encoding are: hex encoding (aka base16 encoding), base64 encoding, or base64-url encoding. The documentation does not state which of those encodings is accepted. Helpfully, the example provided in the documentation shows a digest string that appears to be hex-encoded. Unhelpfully, again according to my reading of the code, Mastodon requires a base64-encoded digest!
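To make the encoding question in the paragraph above concrete, here is a minimal sketch using only Node's built-in crypto module. The helper name `sha256Encodings` and the sample body are my own inventions for illustration; nothing here comes from the Mastodon code or documentation. It computes one SHA-256 digest and renders it in all three encodings, so you can see how different the strings look.

```javascript
const crypto = require('crypto');

// Return the SHA-256 digest of `body` in the three common string encodings.
// (Hypothetical helper, for illustration only.)
function sha256Encodings(body) {
  // digest() with no argument returns the raw 32 bytes as a Buffer
  const bytes = crypto.createHash('sha256').update(body, 'utf8').digest();
  const base64 = bytes.toString('base64');
  return {
    hex: bytes.toString('hex'),
    base64,
    // base64-url: swap the two non-URL-safe characters and drop the padding
    base64url: base64.replace(/\+/g, '-').replace(/\//g, '_').replace(/=+$/, ''),
  };
}

// Hypothetical request body; any string works.
const body = JSON.stringify({ type: 'Note', content: 'hello' });
const enc = sha256Encodings(body);

// A 32-byte digest is 64 hex chars, 44 base64 chars, 43 base64-url chars.
console.log(enc.hex.length, enc.base64.length, enc.base64url.length);

// By my reading of the code, this is the form Mastodon expects:
console.log(`Digest: SHA-256=${enc.base64}`);
```

If the digest string you are about to send is 64 characters long, it is hex-encoded, and by my reading Mastodon will not accept it.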

With these gaps and misleading statements in the documentation, I think it would be impossible for a neophyte to navigate it and successfully implement a client that sends a signature Mastodon can validate. So, for a POST request, here is what I believe you need to do:

- Produce the POST body.

- Compute the SHA-256 digest of the POST body, including all whitespace and any leading or trailing newlines. Try this online tool to help you verify your work.

- Encode that computed digest (which is a byte array) with base64. A SHA-256 digest is 32 bytes, so this should produce a string of exactly 44 characters, ending in a single = padding character.

- Set the Digest header to be SHA-256=xxxyyyy, where xxxyyyy is the base64 encoding of the SHA-256 digest.

- Set the HTTP headers for the pending outbound request to include at least host, date, and digest.

- Compute the signature following the example from the npmjs.com site, with headers of “(request-target) host date digest”, and using the appropriate RSA key pair.
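The steps above can be sketched end to end as follows. This is my own illustration, not the npmjs example: it swaps in Node's built-in crypto and URL instead of a signing library, and the function name `signPost`, the inbox URL, and the keyId are all hypothetical. It builds the signing string by hand in the order “(request-target) host date digest” and signs it with RSA-SHA256. It does not send anything.

```javascript
const crypto = require('crypto');

// Build the headers for a signed POST. Hypothetical helper; the parameter
// names are assumptions made for this sketch.
function signPost({ url, body, keyId, privateKey, date = new Date() }) {
  const { host, pathname, search } = new URL(url);

  // Digest header: plain SHA-256 of the exact body bytes, base64-encoded
  const digest = 'SHA-256=' +
    crypto.createHash('sha256').update(body, 'utf8').digest('base64');

  const headers = { host, date: date.toUTCString(), digest };
  const signed = ['(request-target)', 'host', 'date', 'digest'];

  // One "name: value" line per signed header, joined with \n, no trailing newline
  const signingString = signed.map((name) =>
    name === '(request-target)'
      ? `(request-target): post ${pathname}${search}`
      : `${name}: ${headers[name]}`
  ).join('\n');

  // This is the only place the RSA key is used
  const signature = crypto.createSign('RSA-SHA256')
    .update(signingString)
    .sign(privateKey, 'base64');

  headers.signature = `keyId="${keyId}",algorithm="rsa-sha256",` +
    `headers="${signed.join(' ')}",signature="${signature}"`;
  return { headers, signingString, signature };
}

// Usage with a throwaway key pair, so the sketch runs on its own.
const { privateKey, publicKey } =
  crypto.generateKeyPairSync('rsa', { modulusLength: 2048 });

const result = signPost({
  url: 'https://mastodon.example/users/someone/inbox', // hypothetical
  body: JSON.stringify({ type: 'Note', content: 'hello' }),
  keyId: 'https://my.example/actor#main-key',          // hypothetical
  privateKey,
});

// Check locally that the signature verifies against the public key
const ok = crypto.createVerify('RSA-SHA256')
  .update(result.signingString)
  .verify(publicKey, result.signature, 'base64');
console.log(ok, result.headers);
```

Verifying locally like this only proves the signature matches the signing string you built. Whether Mastodon reconstructs the same signing string on its side still depends on your sending exactly the same host, date, and digest header values that you signed.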

If it were me, I would also include a :created: and an :expires: field in the HTTP signature.

You can play around with HTTP Signatures using this online tool. That tool does not yet support computing a Digest of a POST body, but I’ll look into extending it to do that too.

Let me know in the comments if any of this is not clear.

I posted a working example for Node.js as a gist on GitHub.

It depends only on Node.js and its built-in libraries for crypto and URL to compute the hash/digest and signature. It does not actually send a request to Mastodon; that is left for you to do.