I went out to Jimmy John’s the other night, to get a sandwich with a good buddy of mine. It was pretty fast. I ordered a standard ham-and-cheese sandwich, and my buddy got an Italian hoagie with banana peppers. They were quite tasty! I guess we were in the store, conducting this transaction, for less than 5 minutes. Pretty fast. And easy.

Easy wins. People will always choose the easier option. People are willing to pay more, or receive less, in order to get something easy. “Fast food” is cheap, but it isn’t the cheapest. Often it’s even less expensive to buy ingredients at the grocery and prepare a meal yourself.

We paid about $15 (USD) for 2 sandwiches, or roughly $7.50 each. (We didn’t order drinks because soda is toxic sludge and doesn’t belong in the human diet.) Now, I could have gone to the grocery across the parking lot, purchased a pound of ham, a loaf of bread, and some lettuce and tomato for about $20, and had plenty of ingredients to make 5 sandwiches or more, which works out to $4 or less per sandwich. Instead, we paid for the convenience and we were happy to do it.

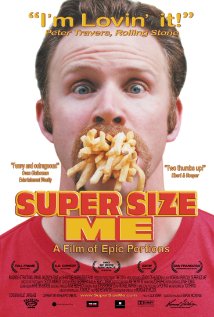

But fast food does not always deliver the best nutrition. It’s easy, and it might even be “tasty”, but sometimes it’s not good. (And yes, I know that the film “Super Size Me” was criticized for being less than 100% truthful, and less than scientific in its analysis.) In a free economy, for every potential purchase, the consumer decides whether the combination of ease, quality, and price of the thing on offer represents a good deal. Generally, “easy” is worth good money.

And that makes sense. People have things to do, and for some people, preparing food is not high on the priority list. Likewise, people will pay $50 to get the oil changed in their car, even though they could do it themselves with about $28 worth of supplies. People pay for convenience when maintaining their cars.

And the same is true when managing and operating information systems. People will often sacrifice quality in order to gain some ease. Or they may pay more for greater ease. That’s often the right choice.

The adoption of Windows Server in the enterprise, starting in 1996 or so, was a perfect example of that. People had so-called “Enterprise Grade” Unix-based options available. But Windows was simple to deploy and easy to configure for basic file and printer sharing and for database access. Who can argue with those choices? Sure, there were drawbacks, but even so, the preference for easier solutions continues.

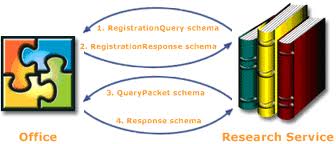

As another example, these days, for interconnecting disparate information systems, REST APIs are much, much more popular than SOAP. There are numerous indicators of this trend, but one of them is the Google Insights for Search data:

(Can you guess which line in the above is “REST” and which represents the search volume on “SOAP”?) The popularity of REST may seem curious to law-and-order IT professionals. SOAP provides a means to explicitly state the contract for interconnecting systems, in the WSDL document. And WSDL provides a way to rigorously specify the type of the data being sent and received, including the shape of the XML data, the XML namespaces, and so on. SOAP also provides a way to sign documents (through the WS-Security extension), as a way to provide non-repudiation. In the REST API model, none of that is built in. You’d have to do it all yourself.

But apparently, people don’t want all that capability. Or rather, they may want it, but they want ease of development more. When given the choice between greater capability with more strictures (SOAP) and a simpler, easier way to connect (REST APIs), people have been choosing the easy path, and the trend is strengthening.
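To make the difference in ceremony concrete, here is a minimal sketch in Python that builds the same request both ways. Everything about the service is made up for illustration: the `PlaceOrder` operation, the `http://example.com/orders` namespace, and the field names are assumptions, not a real API. The sketch only builds the message bodies; it does not send anything, and it leaves out the WSDL and any signing, which would add still more work on the SOAP side.

```python
import json
import xml.etree.ElementTree as ET

# The SOAP 1.1 envelope namespace is standard; the order namespace is hypothetical.
SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"
ORDER_NS = "http://example.com/orders"


def build_soap_request(sandwich: str, quantity: int) -> str:
    """Build a SOAP 1.1 envelope for a hypothetical PlaceOrder operation."""
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    order = ET.SubElement(body, f"{{{ORDER_NS}}}PlaceOrder")
    ET.SubElement(order, f"{{{ORDER_NS}}}Sandwich").text = sandwich
    ET.SubElement(order, f"{{{ORDER_NS}}}Quantity").text = str(quantity)
    return ET.tostring(envelope, encoding="unicode")


def build_rest_request(sandwich: str, quantity: int) -> str:
    """Build the JSON body for a hypothetical POST /orders call."""
    return json.dumps({"sandwich": sandwich, "quantity": quantity})


soap_body = build_soap_request("ham-and-cheese", 1)
rest_body = build_rest_request("ham-and-cheese", 1)

print(soap_body)
print(rest_body)
print(len(soap_body), "characters of SOAP vs.", len(rest_body), "characters of JSON")
```

The JSON version is one line of code and a handful of characters on the wire. The SOAP version needs an envelope, a body, and namespaces on every element, and that is before anyone has written the contract that describes it.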

APIs are winning because they’re easy.